Pickup

-

Reporter's Eye

Smart trash cans appear at ZOZO Marine Stadium, a tailwind for Chiba Lotte as attendance keeps growing

-

How Engineers Work: The Real Story

What bosses fail to notice: why new employees cannot do "hō-ren-sō" (report, inform, consult)

-

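Nissin Foods: The Full Picture of Its "Digital Armament"

Security investment more than doubles in three years: Nissin Foods gathers professional talent and sticks to "defense first, offense second"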

-

News Analysis

CO2 emissions during development include the "preparation stage": NTT group companies, NEC, Fujitsu, and Hitachi draw up rules

-

Lunar Economic Zone

Tires for Toyota's lunar rover enter their second generation as Bridgestone adapts its puncture-free technology

-

Golden Week Special 2024

Business emails in a flash with minimal instructions: how well five AI services write

-

News Analysis

Strength in aircraft production technology: Boeing and Airbus inspect Kawasaki Heavy Industries' one-shot drilling robot

-

News Analysis

Unglamorous but impressive: ADEKA sets its sights on advanced logic materials after DRAM

-

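News

-

Fuel consumption test data falsified at IHI subsidiary, affecting 90% of marine engines

On April 24, 2024, IHI announced that its subsidiary IHI Power Systems (Chiyoda, Tokyo), which manufactures marine and land-use engines, had for many years falsified test data related to fuel consumption for marine engines and other products, and shipped…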

-

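FANUC sets up special investigation committee over suspected testing misconduct involving wire electrical discharge machines

On April 24, 2024, FANUC announced suspected testing misconduct involving its wire electrical discharge machine "FANUC ROBOCUT" in relation to the harmonized standards of the European EMC (electromagnetic compatibility) Directive…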

-

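Mitsubishi Chemical Group develops resin material for lunar rovers, applying its materials expertise to structural design

Mitsubishi Chemical Group has developed a lightweight, highly rigid resin component for lunar rovers. Going beyond material selection, it handled everything from structural design to injection molding and improved impact resistance compared with conventional products. Space…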

Topics

-

Nikkei xTECH Editors' Picks

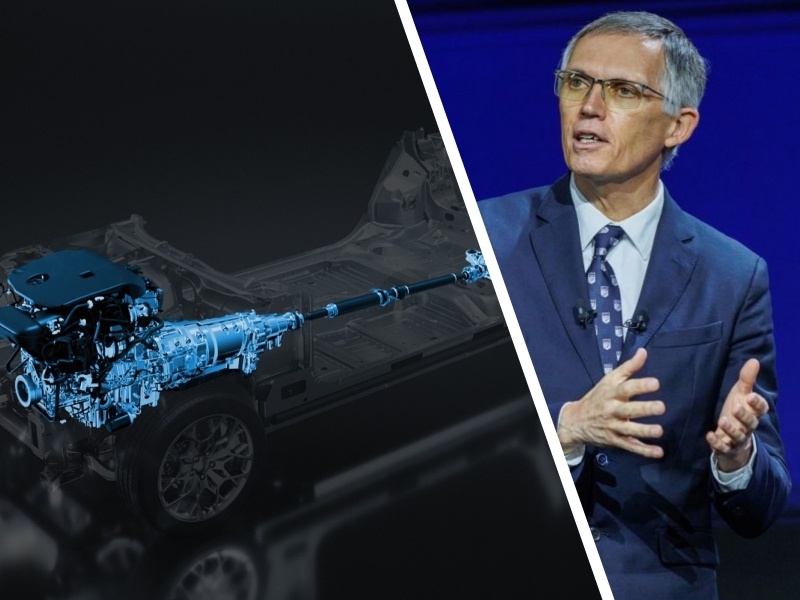

Nidec's new management structure: how will it take on the world?

-

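Information Technology Monthly Archives

Major IT firms' 2024 spring wage negotiations / No time to lose on the 2024 logistics problem / Mizuho: will the fourth time be the charm?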

-

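Advanced Technology Monthly Archives

"EV-first" design leaves an escape route for engines / EUV lithography systems are full of hurdles / SLIM, which withstood a 270°C temperature swing, opens a path to lunar surface activity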

-

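Construction Industry Monthly Archives

Taisei Corporation wins the order to move the Shuto Expressway underground at Nihonbashi / Deformation at Kinokawa IC caused by reduced permeability of the embankment / Toward relaxing the full-time assignment requirement for supervising engineers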

-

The "Basic IT Glossary" to read before you pretend to know

-

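Useful at work! Glossaries for IT, electronics, manufacturing, architecture, and civil engineering, plus command references for Excel, Linux, and networking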

-

Please sign up: introducing the benefits of "Nikkei xTECH registered membership (free)"

-

Paid members get unlimited access to all articles! Find any article you want with the "Nikkei xTECH Usage Guide"

Special Sites

Nikkei xTECH / Books from Technology Media

-

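Illustrated: Wooden House Troubles Worst 20+3: Causes and Countermeasures Learned from "Rain Leak Accident" and "Structural Accident" Cases

Classifies wooden house troubles into the "worst 20 rain leaks" and the "worst 3 structural failures". Causes, countermeasures, and damage costs are...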

-

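Tokyo Great Remodeling 2030: 100 Huge Projects That Will Change the Cityscape of Central Tokyo

Reporters specializing in architecture and civil engineering take an in-depth look at Tokyo's redevelopment projects, presented with abundant photos and drawings...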

-

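Thinking About Dangerous Design with First-Class Architect 矩子

Everyday accidents in homes and buildings, the background to them, and effective prevention measures, from the popular architecture manga "一級建築士矩子の設計思考"...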

-

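SoftBank's Other Face: A Group of Problem-Solving Professionals Driving Growth

SoftBank has another face beyond its mobile carrier business. In this book, interviews with key people...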

-

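Examining the Noto Peninsula Earthquake: What If a Tokyo Inland or Nankai Trough Megaquake Struck Now

Features many photos of the damage taken on site immediately after the earthquake. Drawing on interviews with experts and facility officials, it reveals the architectural and civil...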

-

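Next-Generation Automobiles 2024

[Early-bird discount available through April 30!] Nikkei Automotive looks back on a turbulent year for the automotive industry, and now...

Featured Events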

-

Platinum Forum 2024 Spring

Friday, April 26, 2024, 13:00-17:00 (scheduled)

-

Nikkei xTECH NEXT Kansai 2024

Thursday, May 16 to Friday, May 17, 2024

-

Nikkei Business CEO Council

Thursday, May 16, 2024, 17:00-19:50

-

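Toward building a strategic supply chain that wins in the VUCA era

Friday, May 24, 2024, 10:00-16:20

-

Overcoming the labor shortage: growth scenarios for Japanese industry 2024

Thursday, May 30, 2024, 10:20-17:45

-

Career ownership changes society

Monday, June 3 to Wednesday, June 5, 2024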

-

DX Insight 2024 Summer

Tuesday, June 4 and Wednesday, June 5, 2024

-

WOMAN EXPO 2024

Saturday, June 8, 2024, 10:00-17:30

-

Digital Nation Japan 2024

Monday, June 10 and Tuesday, June 11, 2024

-

DIGITAL Foresight 2024 Summer

Thursday, June 13 to Thursday, August 8, 2024, 16:00-17:00 (planned for every Tuesday and Thursday)

Featured Articles

Special Site Archive

Nikkei xTECH Special

What's New!

IT

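- Generative AI and cyberattacks: four important perspectives

- PayPay Bank: banking infrastructure for a new era

- What kind of PC suits the "new way of working"?

- How can system operations be made dramatically more efficient?

- [Generative AI case study] Using digital technology to transform frontline operations

- A passionate debate on "what operations should look like in the cloud era"

- The "best PC for each employee" according to job and role

- No time to lose on generative AI: the secrets of success

- The goal: a multicloud environment you can move across freely

- ROIC management that dramatically boosts "earning power"

- Why kintone adoption is advancing even at large enterprises

- Asking the company with top satisfaction in the "server" category

- Is moving to SaaS the key to healthcare security?

- Manufacturing DX: "What is the scenario for successful data-driven management?"

- A top European company discusses security in Japan

- Osaka Kyoiku University and Mouse study what the PC classroom should be

- How can the latest "post-authentication attacks" be prevented?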

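- A hands-on education program supported by NTT Docomo

- Promoting DX in the purchasing of business PCs and IT devices

- JTEKT Electronics' DX case study

- The key to using generative AI is "in-house development and agile"

- Unleash engineers' capabilities with AI and automation

- Why outstanding digital talent gathers at NTT Data

- Experts weigh in: where do awareness and countermeasures in Japan stand?

- Learning the "Network Utilization White Paper" with Reimi Kanda

- Exploring the secrets of zero trust success with Reimi Kanda

- ORIX Bank × Fujitsu President Tokita: a special three-way discussion

- ERP projects: what are the essential qualities for IT talent?

- Why managed services can be used at low cost

- Legacy migration projects: what kind of talent do SIers need?

- Osaka Prefecture takes on DX and generative AI

- A three-stage approach to strengthening a company through DX

- A catalyst for innovation

- What should PMs watch out for in large-scale projects?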

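- Data management aligned with business strategy

- What is the methodology for "manufacturing DX" that goes on the offensive while staying protected?

- How to protect clinical data from ransomware

- The forefront of thermal design, learned from the latest servers

- Solving PC management challenges all at once

- A "Japan-made generative AI model" that is strong in Japanese is born

- Video explainer: from generative AI to DX transformation

- Why DX in the Osaka and Nagoya areas is drawing attention

- The optimal network, delivered quickly, cheaply, and easily!

- Security × skill-building: JSOL's proposal

- The focus shifts to "predicting the future": key points of data utilization

- The value of SAP BTP evolves with generative AI

- Accelerating DX with ServiceNow: how is it done?

- How to sketch the overall picture of an SAP project

- The Let's note that a university vice president active in advanced education keeps using

- In the DX era, what is the "ideal" for IT talent and organizations?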